Why SOPs are not enough to run scientific workflows

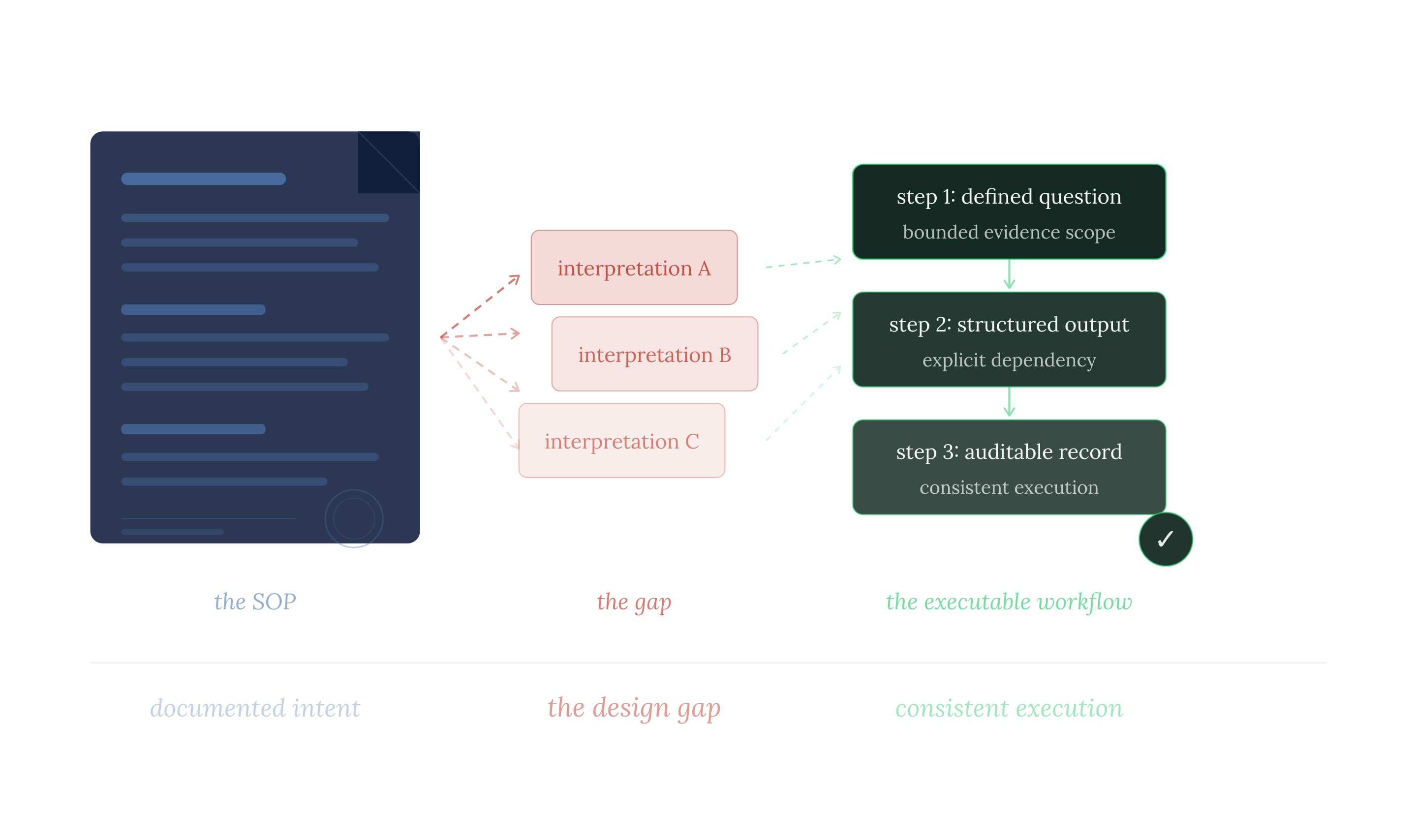

The gap between a documented SOP and an executable workflow is a gap in design, not in effort or rigour.

Standard Operating Procedures (SOPs) describe what a research process is intended to accomplish, but they do not govern how it is actually carried out. The gap between intent and execution is where inconsistency emerges, through undocumented judgment calls, informal handoffs, and steps that rely on unstated knowledge.

When an SOP depends on the author’s tacit understanding to function correctly, it introduces a single point of failure. Execution becomes contingent on individual interpretation rather than a shared, reproducible standard.

Most life sciences organizations treat SOPs as the foundation of process consistency. That foundation is structurally limited, because there is a mismatch between what SOPs are designed to capture and what consistent execution requires.

What does an SOP actually do and what does it not do?

An SOP defines the sequence of a process and the standards it is expected to meet. That is its purpose, and it serves it well. What it does not define is the reasoning within each step: the question being answered, the scope of evidence to consider, how one step’s findings should shape the next, or what form the output must take to be usable downstream.

These are the decisions that determine whether a process produces consistent results. SOPs leave all of them to the scientist’s judgment during execution, where subjective bias becomes part of the process.

Consider a research team with a comprehensive SOP for target prioritization, reviewed quarterly and approved by senior scientists. Two analysts run the same process and produce materially different shortlists. Neither deviated from the SOP. Both followed the sequence and applied the stated criteria. The divergence is the expected outcome of a procedure that defines what to do but not how to interpret what is found.

The process is documented, but not executable.

A well-written SOP shows that someone once understood the process. It does not ensure that others can run it the same way.

Why do research processes fail even when SOPs exist?

The failure modes are specific and they compound. Understanding them is important because each one reveals a design gap that better SOP writing alone cannot fix.

- Step-level question drift. Two scientists interpret the same step differently and search against different scopes. The SOP defines the step, but not the exact question it is meant to answer. Without that precision, individual interpretation becomes unavoidable and divergence follows as a predictable outcome.

- Informal handoffs. Findings move between steps as emails, slide annotations, or verbal summaries rather than structured outputs. Each handoff introduces interpretation. By step five, the process may be operating on a materially different understanding of what step two actually found.

- Implicit knowledge dependencies. Some steps only work if the scientist already knows something the SOP never states. This is the most insidious failure mode because it remains invisible until the person who holds the implicit knowledge leaves or is replaced by someone who does not hold it. The process then appears intact but no longer runs correctly.

- Output format mismatch. Each step produces prose optimized for human readers, not for downstream use. The next scientist must reinterpret that output into something usable. Every reinterpretation introduces another decision point, and with it, another source of variation.

None of these are issues of SOP quality. They are structural limitations of the SOP format when applied to work that requires precise, repeatable execution.

What does a scientific workflow need beyond an SOP?

Organizations that have closed this gap have not done so by writing better SOPs. They recognized that the SOP is the starting point for process design, not the end product. What they added, systematically, is a layer of specification that transforms documented intent into an executable structure. That layer contains these components.

- Task-level question definition. Each step in the workflow specifies the exact question it is answering. Not the objective of the process, not the goal of the project. The precise question that this step, and only this step, is responsible for answering. Without this, two scientists running the same step are not running the same step.

- Explicit dependency structure. The sequence is defined by causality, that is, by what step N+1 needs from step N, not by convention or historical practice. When the dependency is explicit, a change in what one step finds propagates to what the next step needs to retrieve. The workflow adapts. A static procedure does not.

- Evidence scope per task. Each step retrieves against its specific question, not the whole project objective. Scope defined at the task level is scope that can be held constant across analysts, projects, and time. Scope left implicit is scope that drifts.

- Structured output format. Each step produces output the next step can use without reinterpretation. Not narrative summaries, but structured records of what was asked, what was retrieved, what met the criteria, and what did not. Outputs are designed for downstream use, not for reading.

- Auditable record. The workflow preserves a complete record of what each step asked, what it found, and how it informed the next. Beyond compliance, this is the mechanism by which the organization accumulates knowledge in a structure it can build on, rather than in prose that resets with every new project.

These are design requirements. They cannot be met by adding detail to an SOP. They require a different kind of artifact entirely.
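As a rough illustration of what such an artifact might look like, the sketch below expresses the five components as a small Python structure. It is not a description of any particular product. The names `TaskSpec`, `AuditEntry`, and `execute`, the two toy steps, and the placeholder target labels are all assumptions made for this example.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter
from typing import Callable


@dataclass(frozen=True)
class TaskSpec:
    """One workflow step (hypothetical structure, for illustration only)."""
    name: str
    question: str                # the exact question this step answers
    evidence_scope: str          # what this step may retrieve against
    depends_on: tuple[str, ...]  # upstream steps whose output this step needs
    run: Callable[[dict], dict]  # upstream outputs in, structured record out


@dataclass
class AuditEntry:
    """What a step asked, what it received, and what it produced."""
    task: str
    question: str
    evidence_scope: str
    inputs: dict
    output: dict


def execute(tasks: list[TaskSpec]) -> tuple[dict, list[AuditEntry]]:
    """Run steps in dependency order and keep an auditable record."""
    by_name = {t.name: t for t in tasks}
    # Order comes from declared dependencies, not from list position.
    graph = {t.name: set(t.depends_on) for t in tasks}
    outputs: dict = {}
    audit: list[AuditEntry] = []
    for name in TopologicalSorter(graph).static_order():
        task = by_name[name]
        inputs = {dep: outputs[dep] for dep in task.depends_on}
        output = task.run(inputs)
        outputs[name] = output
        audit.append(
            AuditEntry(name, task.question, task.evidence_scope, inputs, output)
        )
    return outputs, audit


# Toy steps with placeholder labels; these are not real findings.
def find_candidates(_inputs: dict) -> dict:
    return {"met_criteria": ["TARGET_A", "TARGET_B"], "excluded": ["TARGET_C"]}


def assess_safety(inputs: dict) -> dict:
    candidates = inputs["find_candidates"]["met_criteria"]
    flagged = {"TARGET_B"}  # placeholder for a real safety check
    return {
        "met_criteria": [c for c in candidates if c not in flagged],
        "excluded": [c for c in candidates if c in flagged],
    }


workflow = [
    # Listed out of order on purpose: execution follows depends_on.
    TaskSpec(
        name="assess_safety",
        question="Which candidate targets have no flagged safety signal?",
        evidence_scope="safety evidence for the upstream candidates only",
        depends_on=("find_candidates",),
        run=assess_safety,
    ),
    TaskSpec(
        name="find_candidates",
        question="Which targets are linked to the disease of interest?",
        evidence_scope="target-disease evidence for one indication",
        depends_on=(),
        run=find_candidates,
    ),
]

outputs, audit = execute(workflow)
for entry in audit:
    print(entry.task, "->", entry.output["met_criteria"])
```

In this sketch the second step never sees prose. It receives the structured record the first step produced, so a change in the first step's findings automatically changes what the second step evaluates, and the audit list keeps both the question and the result for every step.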

What does closing the SOP-to-workflow gap require?

The gap between a documented SOP and an executable workflow is a gap in design, not in effort or rigour.

The SOP establishes what the process is supposed to accomplish. The design work is everything that sits between that intent and consistent execution: the decisions about scope, sequence, and output that the SOP leaves to the individual scientist. Most organizations have not made that move yet, because the SOP creates the impression that the structural foundation is already in place. It is not. Recognizing that gap precisely is what makes it possible to close it.

If you want to see how Causaly handles evidence retrieval, task dependencies, and structured outputs across a real research process, book a demo and one of our scientists will walk you through it.

